The AI Landscape: Writers' strike, AI mind-reading, and more

AI trends I'm reading about this week, and why your organization might want to be thinking about them too

So far, this newsletter has been focused on the potential of generative AI productivity tools to transform internal organizational workflows.

But mission-driven orgs will also be affected by how AI shapes the external world. Supply chain dynamics, the needs and behavior of your constituents, competitor positioning, the viability of your business model — all of these could change in response to emerging AI technology as well.

Here are some broader trends I’ve been thinking about this week:

The AI health care & accessibility revolutions

AI has already been a major force for better health care, from improving medical record-keeping to detecting cancer. Two recent developments:

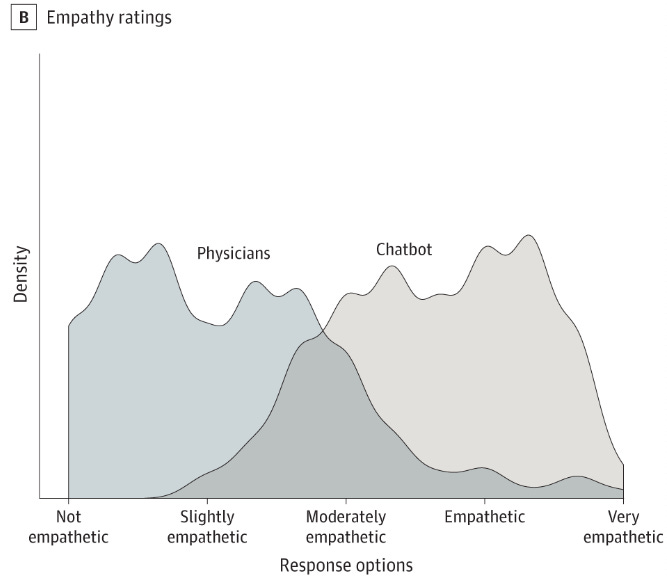

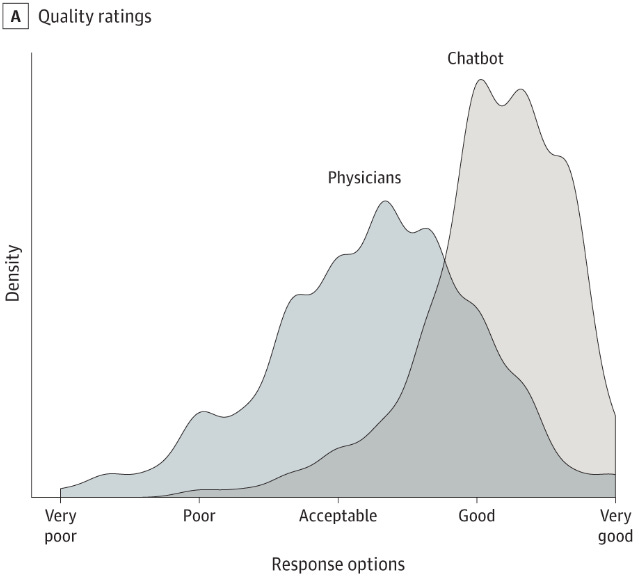

ChatGPT outshines physicians in quality and empathy for online patient queries. (Not that the bar was very high, apparently.) Yet another case where AI can cut health care costs while also improving quality. Key figures below; full paper here.

A.I. Is Getting Better at Mind-Reading. This certainly sounds creepy. But at the current level of this technology — which requires training a whole separate model on each individual person’s brain, and can be easily thrown off by the person whose mind is being read — it appears to have WAY more positive use cases than negative ones.

Both of these are also examples of a broader trend that I called the “Inclusivity Opportunity” on our AI Impact Lab launch webinar:

If AI is better at medical advice than doctors are in English, think how much more impactful it will be for people who aren’t fluent in the dominant tongue of their country’s health systems. And mind-reading technology could be literally life-changing for people who are paralyzed or have speech disabilities.

Food for thought: How can AI help make your organization more inclusive and close access gaps to your services?

Job automation and economic displacement

The displacement of jobs by generative AI may be a huge issue over the coming decade. From where I sit, there’s a lot of grey and not much black and white. For instance, I’m excited for AI to democratize access to the legal system, but that process is also going to really suck for a lot of young lawyers, who will be competing for a fast-shrinking pool of highly-paid jobs that can pay off their debt.

I’d argue that how we react to automation at a cultural and policy level will determine whether on balance it’s a good thing or a bad thing. But it’s not going to be easy. Here are some relevant pieces I’ve been thinking about this week:

TV and film writers are fighting to save their jobs from AI. They won’t be the last. The Hollywood writers’ strike probably deserves a whole post of its own here on AI for Good, but for now I’ll just say this: For a number of structural reasons, screenwriters represented by the Writers Guild of America are probably the single best-positioned of any threatened workforce to win serious concessions from their employers to protect their jobs from AI. So this may be a bellwether strike: If the WGA can’t win significant restrictions on the use of AI, then we should probably expect that no other workforce can either.

Deskilling on the job: A thought-provoking take on potential negative impacts of automation, from Danah Boyd. “When highly trained professionals now babysit machines, they lose their skills. Retaining skills requires practice. How do we ensure that those skills are not lost? If we expect humans to be able to take over from machines during crucial moments, those humans must retain strong skills.”

India’s 5 Million Coders Will Reckon With an AI Jobpocalypse: The internet allowed the United States to outsource millions of jobs to India (and a few other countries, like the Philippines). What these jobs (customer service, coding, data entry, and the like) largely had in common was that they didn’t require physical presence, but did require fluency in English. That sounds an awful lot like… the skillset of a Large Language Model like ChatGPT. Which means that, however bad automation is for segments of the middle class here, it may be way worse for India.

Disinformation and other forms of false content

In a world of deepfake images, video, and audio, everyone is going to have a harder time distinguishing fact from fiction. A few examples:

I Cloned Myself With AI. She Fooled My Bank and My Family. Following my post about how mission-driven organizations must guard against AI-powered phishing scams, a Wall Street Journal columnist tests out the technology herself.

AI Chatbots Have Been Used to Create Dozens of News Content Farms. This is the real “fake news,” and my guess is that this report is only scratching the surface.

Why ChatGPT lies in some languages more than others. Humans can use LLMs (Large Language Models) to intentionally produce false content. But the LLMs themselves also “hallucinate” — i.e., present false facts as if they were true. And a new study shows that the problem is worse in some languages than others. This article does a great job of explaining why: “[ChatGPT’s] answer isn’t really an answer, it’s a prediction of how that question would be answered, if it was present in the training set.” One example of the implications: Since political speech is so heavily censored in China, ChatGPT will answer political questions in Chinese differently from in English — in the direction of what the Chinese government would want. This doesn’t mean you can’t use ChatGPT in multiple languages — it is just another example of how you need to educate yourself and your teams on how LLMs work and where their pitfalls lie.

Panicked yet? Don’t be! No one has all the answers for how to prevent an AI-powered disinformation tsunami. But this webinar from WITNESS, a non-profit that uses video and technology to protect and defend human rights, discusses some possible solutions.

Strategic AI landscape analysis

AI for Good is the newsletter of AI Impact Lab, where our job is to help mission-driven organizations harness AI to achieve greater impact.

One of the services that I offer at AI Impact Lab is strategic AI landscape analysis. In this process, I work with a client to evaluate relevant AI industry trends, how competitive forces and stakeholder behavior might shift in response to AI, how AI could affect the broader political, economic, and cultural landscape, and more. Then we identify opportunities, threats, and potential strategic directions for the client organization in response.

If you’re interested in hiring AI Impact Lab for strategic landscape analysis, or learning about the other ways we can help your mission-driven organization adapt to the fast-changing landscape of generative AI, please be in touch: